Automatic Evaluation Metric for Code with CodeBERTScore

In the era of generative AI, models can easily generate code as well.

However, when we work as a team or company, we usually have standard code for everything that new code is expected to follow.

In some cases, code written by humans or generated by AI will not meet our standards. But how do we check this when there are thousands of code snippets?

Luckily, we can use an automatic code evaluation metric to compare all of our code against the standard.

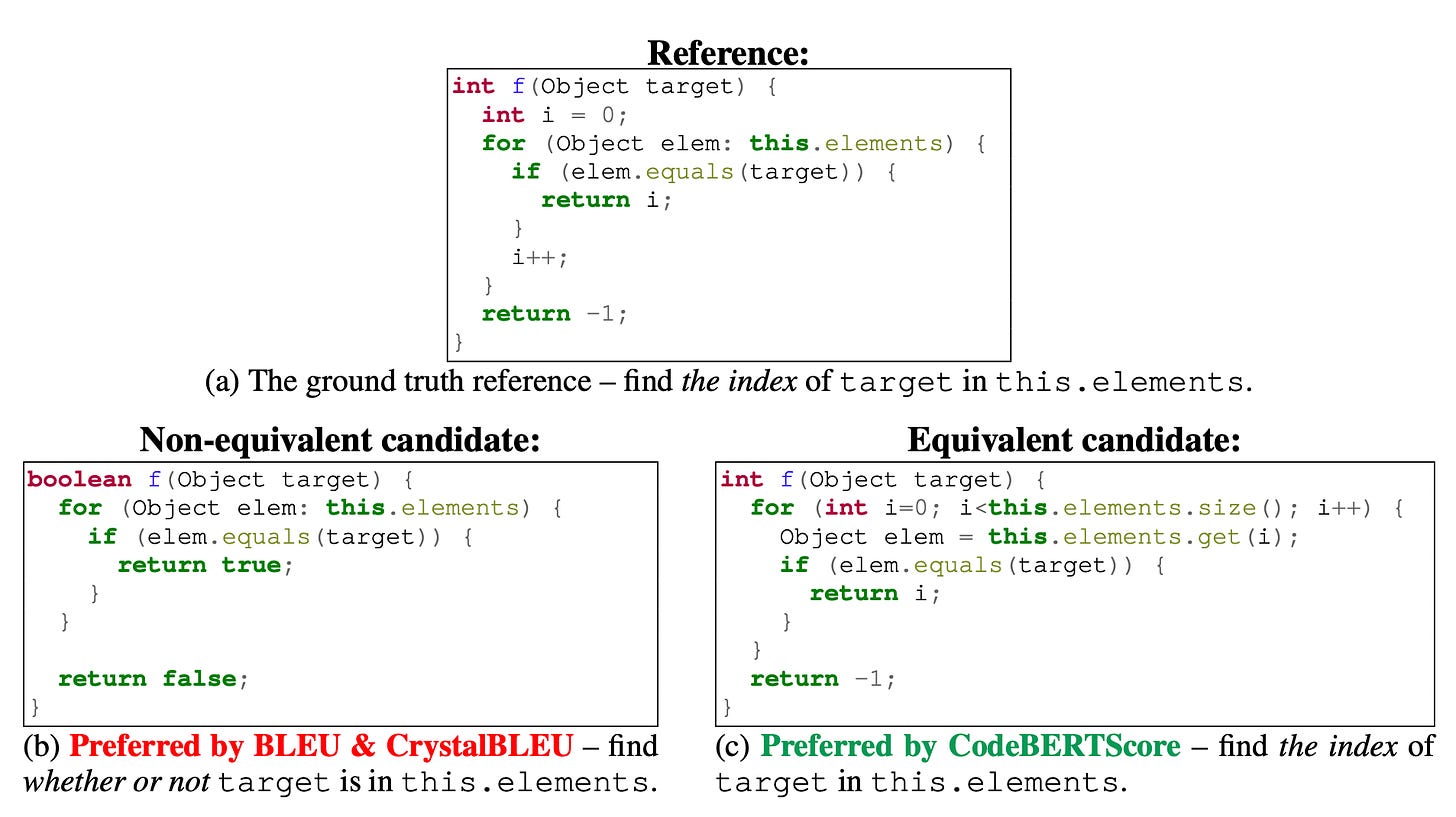

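CodeBERTScore is a metric, available as a Python package, for evaluating code: it measures how similar a candidate snippet is to a reference snippet, such as our standard code. It is based on BERTScore, a metric for evaluating text generation that compares the contextual token embeddings of a candidate and a reference. CodeBERTScore applies the same idea using a model pretrained on code.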
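In simplified form (ignoring BERTScore's optional idf weighting, baseline rescaling, and other implementation details), the greedy token matching behind this comparison can be written as follows. Here $x$ is the sequence of token embeddings of the reference snippet, $\hat{x}$ is that of the candidate, and every embedding is normalized to unit length so that a dot product is a cosine similarity. Recall $R$ asks how well each reference token is covered by the candidate, precision $P$ asks how well each candidate token is supported by the reference, and $F_\beta$ combines them; CodeBERTScore's authors report both $F_1$ and a recall-weighted $F_3$ ($\beta = 3$). Treat this as a summary of the idea, not the package's exact computation.

$$
\begin{aligned}
R &= \frac{1}{|x|} \sum_{x_i \in x} \max_{\hat{x}_j \in \hat{x}} \mathbf{x}_i^\top \hat{\mathbf{x}}_j \\
P &= \frac{1}{|\hat{x}|} \sum_{\hat{x}_j \in \hat{x}} \max_{x_i \in x} \mathbf{x}_i^\top \hat{\mathbf{x}}_j \\
F_\beta &= \frac{(1+\beta^2)\, P\, R}{\beta^2 P + R}
\end{aligned}
$$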

CodeBERTScore can be applied to code written in several programming languages, including Python. So, try it out yourself!
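To try the idea in Python without downloading a model, here is a minimal sketch of the BERTScore-style greedy matching that CodeBERTScore builds on. It is not the package's implementation: `greedy_match_score` is a hypothetical helper, the token embeddings are hand-made 3-D vectors standing in for the output of a code model, and details such as idf weighting are ignored.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def normalize(v: Vector) -> List[float]:
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0:
        raise ValueError("a zero vector has no direction")
    return [c / norm for c in v]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def greedy_match_score(
    reference: Sequence[Vector],
    candidate: Sequence[Vector],
    beta: float = 1.0,
) -> Tuple[float, float, float]:
    """Hypothetical helper: return (precision, recall, F_beta) via greedy matching.

    Each argument is a list of token embeddings, one vector per token.
    """
    if not reference or not candidate:
        raise ValueError("both snippets need at least one token")
    ref = [normalize(v) for v in reference]
    cand = [normalize(v) for v in candidate]
    # Recall: each reference token is matched to its most similar candidate token.
    recall = sum(max(dot(r, c) for c in cand) for r in ref) / len(ref)
    # Precision: each candidate token is matched to its most similar reference token.
    precision = sum(max(dot(r, c) for r in ref) for c in cand) / len(cand)
    denom = beta**2 * precision + recall
    f_beta = 0.0 if denom == 0 else (1 + beta**2) * precision * recall / denom
    return precision, recall, f_beta


# Hypothetical token embeddings; a real run would take these from a code model.
reference = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
complete = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
incomplete = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # third reference token is missing

for name, cand in [("complete", complete), ("incomplete", incomplete)]:
    p, r, f1 = greedy_match_score(reference, cand)
    _, _, f3 = greedy_match_score(reference, cand, beta=3.0)
    print(f"{name}: P={p:.3f} R={r:.3f} F1={f1:.3f} F3={f3:.3f}")
```

The incomplete candidate keeps perfect precision (P=1.000, since everything it contains matches the reference) but drops to R=0.667, and the recall-weighted F3 (0.690) penalizes it more than F1 (0.800). To score real code, the `code-bert-score` package wraps a pretrained model; check its README for the exact function signature and supported languages rather than relying on this sketch.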

That is all for today! Please comment if you want to know about anything else in the Machine Learning and Python domains!